LogisticRegression--python

逻辑回归

import numpy as np

from matplotlib import pyplot as plt

def Sigmod(z):

return 1.0/(1.0+np.exp(-z))

def ComputeCost(x, y, theta, lam):

m = len(y)

h = Sigmod(np.dot(x, theta))

the = theta.copy()

the[0] = 0

temp = np.dot(the.T, the)

J = (0-np.dot(y.T, np.log(h))-np.dot((1-y).T, np.log(1-h))+lam*temp/2)/m

return J

# 映射为多项式,增加特征量

def MapFeature(x1, x2):

deg = 2

out = np.ones((x1.shape[0], 1))

for i in np.arange(1, deg+1):

for j in range(i+1):

temp = x1**(i-j)*(x2**j) # 矩阵直接乘相当于matlab中的点乘.*

out = np.hstack((out, temp.reshape(-1, 1)))

return out

def GradientDescent(x, y, theta, alpha, lam, num_iters):

m = len(y)

n = len(theta)

temp = np.matrix(np.zeros((n, num_iters))) # 暂存每次迭代计算的theta,转化为矩阵形式

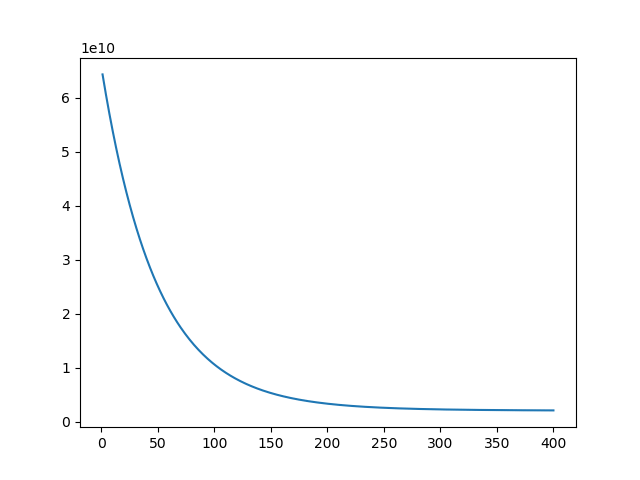

J_history = np.zeros((num_iters, 1)) # 记录每次迭代计算的代价值

for i in range(num_iters): # 遍历迭代次数

h = Sigmod(np.dot(x, theta))

# h = np.dot(X, theta) # 计算内积,matrix可以直接乘

temp[:, i] = theta-((alpha/m)*(np.dot(X.T, h-y))) # 梯度的计算

theta = temp[:, i]

J_history[i] = ComputeCost(X, y, theta, lam) # 调用计算代价函数

# print(J_history[i])

return theta, J_history

def predict(X, theta):

m = X.shape[0]

p = np.zeros((m, 1))

p = Sigmod(np.dot(X, theta)) # 预测的结果,是个概率值

for i in range(m):

if p[i] > 0.5: # 概率大于0.5预测为1,否则预测为0

p[i] = 1

else:

p[i] = 0

return p

# 画决策边界

def plotDecisionBoundary(theta, X, y):

pos = np.where(y == 1) # 找到y==1的坐标位置

neg = np.where(y == 0) # 找到y==0的坐标位置

# 作图

plt.figure(figsize=(15, 12))

plt.plot(X[pos, 0], X[pos, 1], 'ro') # red o

plt.plot(X[neg, 0], X[neg, 1], 'bo') # blue o

#plt.title(u"决策边界", fontproperties=font)

#u = np.linspace(30,100,100)

#v = np.linspace(30,100,100)

u = np.linspace(-1, 1.5, 50) # 根据具体的数据,这里需要调整

v = np.linspace(-1, 1.5, 50)

z = np.zeros((len(u), len(v)))

for i in range(len(u)):

for j in range(len(v)):

z[i, j] = np.dot(MapFeature(u[i].reshape(1, -1),

v[j].reshape(1, -1)), theta) # 计算对应的值,需要map

z = np.transpose(z)

# 画等高线,范围在[0,0.01],即近似为决策边界

plt.contour(u, v, z, [0, 0.01], linewidth=2.0)

# plt.legend()

plt.show()

if __name__ == "__main__":

data = np.loadtxt('data2.txt', delimiter=',', dtype=np.float64)

lam = 0.1

alpha = 0.1

X = data[:, 0:-1]

y = data[:, -1].reshape(-1, 1)

X = MapFeature(X[:, 0], X[:, 1])

theta = np.zeros((X.shape[1], 1))

print(ComputeCost(X, y, theta, lam))

theta, J_his = GradientDescent(X, y, theta, alpha, lam, 5000)

print(theta)

p = predict(X, theta) # 预测

# 与真实值比较,p==y返回True,转化为float

print(u'在训练集上的准确度为%f%%' % np.mean(np.float64(p == y)*100))

X = data[:, 0:-1]

y = data[:, -1]

plotDecisionBoundary(theta, X, y) # 画决策边界

逻辑回归数字识别

import numpy as np

from matplotlib import pyplot as plt

import scipy.io as spio

def Sigmod(z):

return 1.0/(1.0+np.exp(-z))

def ComputeCost(x, y, theta, lam):

m = len(y)

h = Sigmod(np.dot(x, theta))

the = theta.copy()

the[0] = 0

temp = np.dot(the.T, the)

J = (0-np.dot(y.T, np.log(h))-np.dot((1-y).T, np.log(1-h))+lam*temp/2)/m

return J

# 映射为多项式,增加特征量

def MapFeature(x1, x2):

deg = 2

out = np.ones((x1.shape[0], 1))

for i in np.arange(1, deg+1):

for j in range(i+1):

temp = x1**(i-j)*(x2**j) # 矩阵直接乘相当于matlab中的点乘.*

out = np.hstack((out, temp.reshape(-1, 1)))

return out

def GradientDescent(X, y, theta, alpha, lam, num_iters):

m = len(y)

n = len(theta)

temp = np.matrix(np.zeros((n, num_iters))) # 暂存每次迭代计算的theta,转化为矩阵形式

J_history = np.zeros((num_iters, 1)) # 记录每次迭代计算的代价值

for i in range(num_iters): # 遍历迭代次数

h = Sigmod(np.dot(X, theta))

# h = np.dot(X, theta) # 计算内积,matrix可以直接乘

temp[:, i] = theta-((alpha/m)*(np.dot(X.T, h-y))) # 梯度的计算

theta = temp[:, i]

J_history[i] = ComputeCost(X, y, theta, lam) # 调用计算代价函数

# print(J_history[i])

return theta, J_history

def predict(X, theta):

m = X.shape[0]

p = np.zeros((m, 1))

p = Sigmod(np.dot(X, theta)) # 预测的结果,是个概率值

for i in range(m):

if p[i] > 0.5: # 概率大于0.5预测为1,否则预测为0

p[i] = 1

else:

p[i] = 0

return p

# 显示100个数字

def display_data(imgData):

sum = 0

'''

显示100个数(若是一个一个绘制将会非常慢,可以将要画的数字整理好,放到一个矩阵中,显示这个矩阵即可)

- 初始化一个二维数组

- 将每行的数据调整成图像的矩阵,放进二维数组

- 显示即可

'''

pad = 1

display_array = -np.ones((pad+10*(20+pad), pad+10*(20+pad)))

for i in range(10):

for j in range(10):

display_array[pad+i*(20+pad):pad+i*(20+pad)+20, pad+j*(20+pad):pad+j*(20+pad)+20] = (

imgData[sum, :].reshape(20, 20, order="F")) # order=F指定以列优先,在matlab中是这样的,python中需要指定,默认以行

sum += 1

plt.imshow(display_array, cmap='gray') # 显示灰度图像

plt.axis('off')

plt.show()

def OneAll(X, y, nums, alpha, lam, num_iters):

m, n = X.shape

theta_a = np.zeros((n+1, nums))

X = np.hstack((np.zeros((m, 1)), X))

class_y = np.zeros((m, nums))

for i in range(nums):

class_y[:, i] = np.int32(y == i).reshape(1, -1)

for i in range(nums):

init_theta = np.zeros((n+1, 1))

theta, _ = GradientDescent(

X, class_y[:, i].reshape(-1, 1), init_theta, alpha, lam, num_iters)

theta_a[:, i] = theta.reshape((1, -1))

return theta_a.T

def predict_oneVsAll(all_theta, X):

m = X.shape[0]

p = np.zeros((m, 1))

X = np.hstack((np.ones((m, 1)), X)) # 在X最前面加一列1

h = Sigmod(np.dot(X, np.transpose(all_theta))) # 预测

'''

返回h中每一行最大值所在的列号

- np.max(h, axis=1)返回h中每一行的最大值(是某个数字的最大概率)

- 最后where找到的最大概率所在的列号(列号即是对应的数字)

'''

p = np.array(np.where(h[0, :] == np.max(h, axis=1)[0]))

for i in np.arange(1, m):

t = np.array(np.where(h[i, :] == np.max(h, axis=1)[i]))

p = np.vstack((p, t))

return p

if __name__ == "__main__":

num_lables = 10

alpha = 0.1

lam = 0.1

num_iters = 1000

data = spio.loadmat("data_digits.mat")

X = data['X'] # 获取X数据,每一行对应一个数字20x20px

y = data['y']

m, n = X.shape

rand = [np.random.randint(0, m) for x in range(100)]

# display_data(X[rand, :])

theta_a = OneAll(X, y, num_lables, alpha, lam, num_iters)

p = predict_oneVsAll(theta_a, X) # 预测

# 将预测结果和真实结果保存到文件中

res = np.hstack((p, y.reshape(-1, 1)))

np.savetxt("predict.csv", res, delimiter=',')

print(u"预测准确度为:%f%%" % np.mean(np.float64(p == y.reshape(-1, 1))*100))

All articles in this blog are licensed under CC BY-NC-SA 4.0 unless stating additionally.

Comment

GitalkValine